fire_rainbow

Power Member

The reviews start coming out at 14:00 (Portugal time).

Yes, I think it's at 15:00 (Central European Time), so it should be 14:00 here.

But Puget is a company that sells workstations, so their review shouldn't be covered by the NDA. What people really want to know about is the FPS.

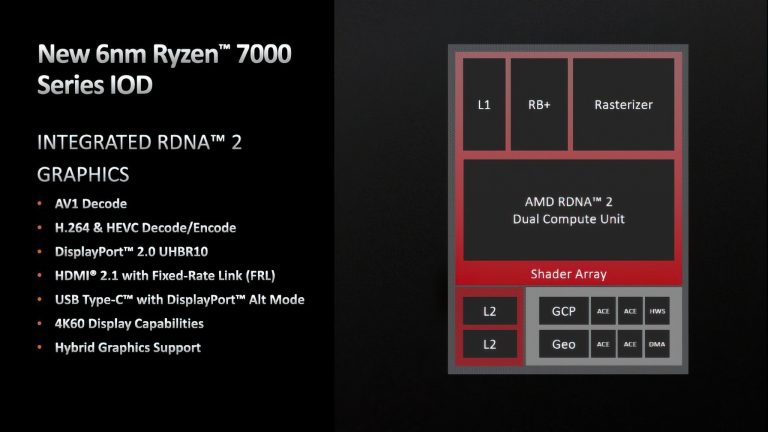

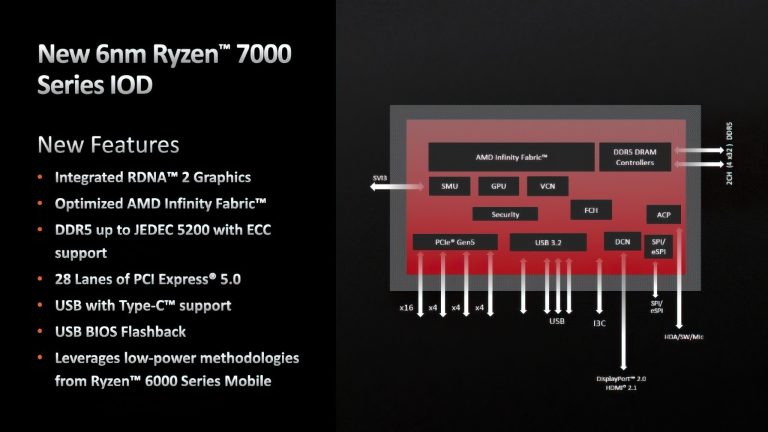

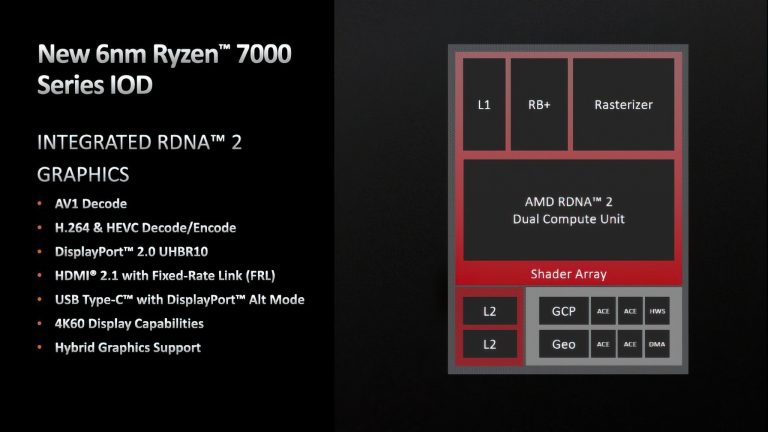

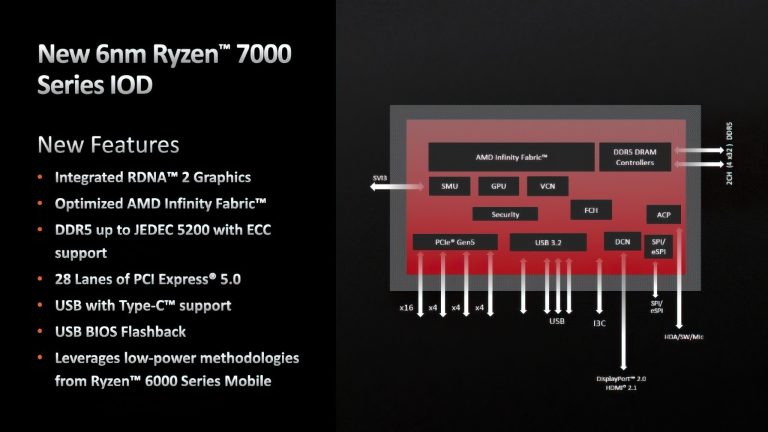

EDIT: in the meantime, it seems AMD has already revealed the iGPU in the I/O die of the Ryzen 7000.

Even these iGPUs support DisplayPort 2.0 and the new RTX 4000 cards don't. Lol

Test Bed and Setup

As per our processor testing policy, we take a premium category motherboard suitable for the socket, and equip the system with a suitable amount of memory running at the manufacturer's maximum supported frequency. This is also typically run at JEDEC subtimings where possible. It is noted that some users are not keen on this policy, stating that sometimes the maximum supported frequency is quite low, or faster memory is available at a similar price, or that the JEDEC speeds can be prohibitive for performance.

While these comments make sense, ultimately very few users apply memory profiles (either XMP or other) as they require interaction with the BIOS, and most users will fall back on JEDEC-supported speeds - this includes home users as well as industry who might want to shave off a cent or two from the cost or stay within the margins set by the manufacturer. Where possible, we will extend our testing to include faster memory modules either at the same time as the review or at a later date.

Memory benchmarks

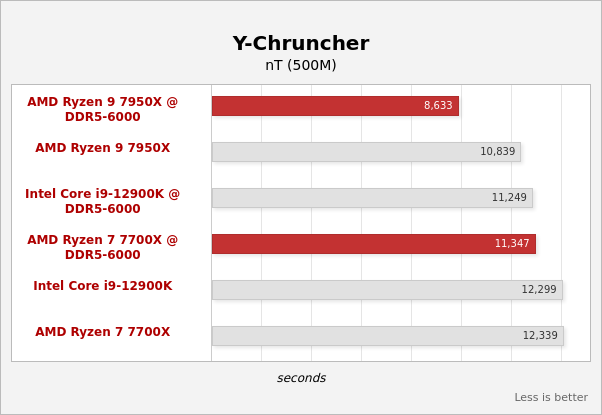

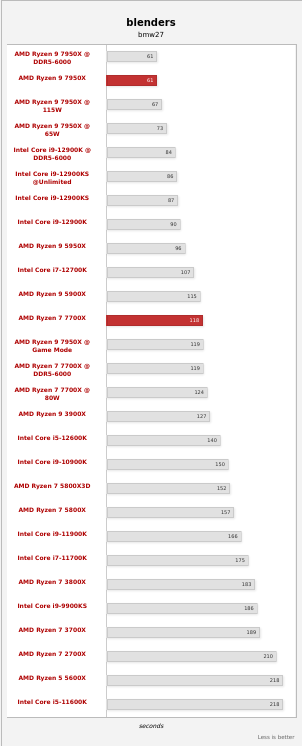

First, let's look at a few synthetic memory benchmarks. Unless marked otherwise, we ran these with DDR5-5200 on the Ryzen 7000 processors and with DDR5-4800 on the Core i9-12900K. We then switched each platform to DDR5-6000, which is the interesting range for us.

As expected, raising the memory clock and with it the transfer rate to 6,000 MT/s has a positive effect on throughput. The Ryzen 7 7700X suffers from somewhat lower read throughput due to the connection of the CCD to the IOD. Still, it becomes clear here that DDR5-5200 is not the memory you want to use with the new processors.
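For reference, here is a rough sketch of the theoretical peak bandwidth behind those transfer rates. It assumes a dual-channel setup with 64 bits per channel, which the review does not state explicitly, and `peak_bandwidth_gbs` is only an illustrative name. Measured throughput in the benchmarks will be lower than these ceilings.

```python
def peak_bandwidth_gbs(transfer_rate_mts: int,
                       channels: int = 2,
                       bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    Assumption: dual channel, 64 bits per channel (not stated in the review).
    """
    bytes_per_transfer = channels * bus_width_bits // 8
    # MT/s * bytes per transfer = MB/s; divide by 1000 for GB/s
    return transfer_rate_mts * bytes_per_transfer / 1000


for rate in (4800, 5200, 6000):
    print(f"DDR5-{rate}: {peak_bandwidth_gbs(rate):.1f} GB/s")
```

Under these assumptions the ceilings are 76.8, 83.2 and 96.0 GB/s, so going from DDR5-5200 to DDR5-6000 adds roughly 15% of theoretical bandwidth.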

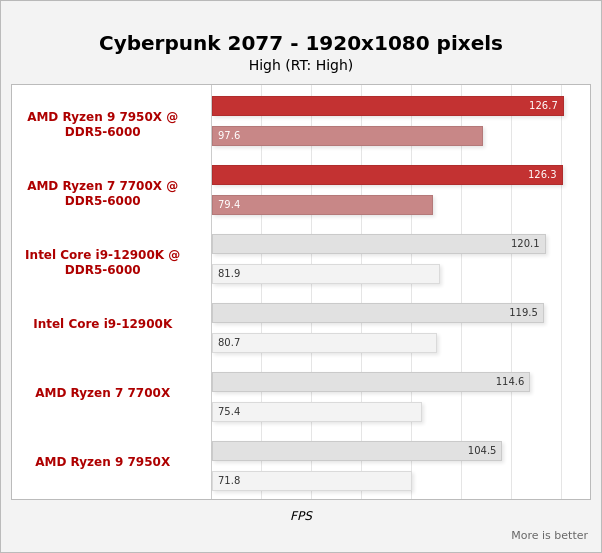

And now some games:

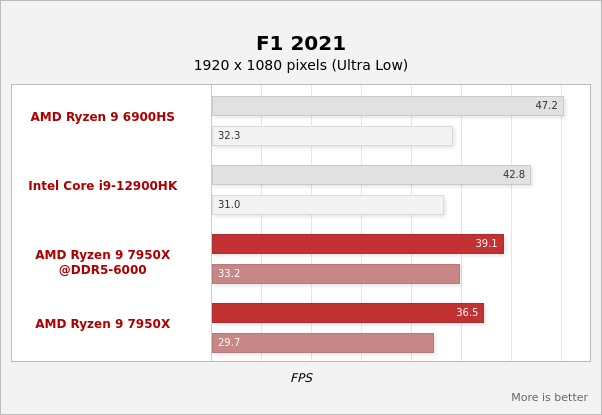

https://www-hardwareluxx-de.transla...sl=de&_x_tr_tl=en&_x_tr_hl=pt-PT&_x_tr_pto=sc

Both the Core i9-12900K and the two Ryzen models benefit, in some cases substantially, from the faster memory. Of course, this also depends somewhat on the game and the selected settings, but an FPS gain of 10 to 15% is possible if you are not yet completely GPU-limited.

We use the following test system configurations to test the processors:

AMD AM5 (Ryzen 7000):

- Gigabyte X670 AORUS Elite AX

- G.Skill Trident Z5 Neo 2x 16GB DDR5-6000 30-38-38-96

- be quiet! Dark Rock Pro 4

- Corsair HX1000 power supply

- GeForce RTX 3090 Ti

Intel LGA1700 (Alder Lake) for DDR5:

- ASUS ROG Maximus Z690 Hero

- Kingston Fury Beast 2x 16GB DDR5-5200 40-39-39-76

- be quiet! Dark Rock Pro 4

- Corsair HX1000 power supply

- GeForce RTX 3090 Ti

Second system for DDR4:

- ASUS TUF Z690-Plus WIFI D4

- Corsair Vengeance 4x 8GB DDR4-3600 18-19-19-39

- be quiet! Dark Rock Pro 4

- Corsair HX1000 power supply

- GeForce RTX 3090 Ti
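To put the timings of the three kits above side by side, the CAS latency can be converted from clock cycles into nanoseconds. This is only a sketch: it takes the first number of each timing string as CL (the usual convention) and looks at first-word latency alone, ignoring the other timings. `cas_latency_ns` is an illustrative name.

```python
def cas_latency_ns(transfer_rate_mts: int, cas_cycles: int) -> float:
    """CAS latency in nanoseconds.

    DDR transfers twice per clock, so the clock in MHz is MT/s / 2
    and one cycle lasts 2000 / MT/s nanoseconds.
    """
    return cas_cycles * 2000 / transfer_rate_mts


# (label, transfer rate in MT/s, CL) taken from the kit lists above
kits = [
    ("G.Skill DDR5-6000 CL30", 6000, 30),
    ("Kingston DDR5-5200 CL40", 5200, 40),
    ("Corsair DDR4-3600 CL18", 3600, 18),
]

for label, rate, cl in kits:
    print(f"{label}: {cas_latency_ns(rate, cl):.2f} ns")
```

By this measure the DDR5-6000 CL30 and DDR4-3600 CL18 kits both land at 10 ns, while the DDR5-5200 CL40 kit sits at about 15.4 ns.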

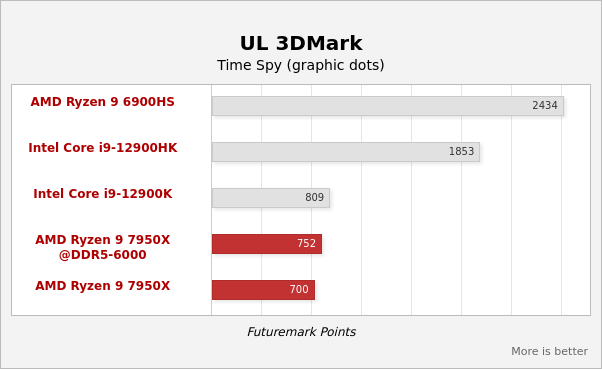

Even if the integrated RDNA 2 GPU should only be used by gamers in exceptional cases, we ran a few benchmarks with it. But first, let's take a look at the GPU-Z screenshot.

Two compute units, and therefore 128 shader units, are nothing to write home about. The GPU clock is 2,200 MHz. Main memory serves as graphics memory, so performance depends to some degree on its speed.
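A quick back-of-the-envelope sketch of what those numbers mean. It uses 64 shader units per RDNA 2 compute unit and counts a fused multiply-add as two FP32 operations per shader per clock. The result is a theoretical peak, not something the review measured, and the function names are illustrative.

```python
SHADERS_PER_CU = 64        # RDNA 2: 64 stream processors per compute unit
FLOPS_PER_SHADER_CLK = 2   # one fused multiply-add counts as 2 FP32 ops


def shader_units(compute_units: int) -> int:
    """Total shader units for a given number of compute units."""
    return compute_units * SHADERS_PER_CU


def peak_fp32_gflops(compute_units: int, clock_mhz: int) -> float:
    """Theoretical peak FP32 throughput in GFLOPS."""
    return shader_units(compute_units) * FLOPS_PER_SHADER_CLK * clock_mhz / 1000


print(shader_units(2))
print(f"{peak_fp32_gflops(2, 2200):.1f} GFLOPS")
```

For 2 CUs at 2,200 MHz this works out to 128 shaders and about 563 GFLOPS of peak FP32, which fits the review's point that the iGPU is meant for display output rather than gaming.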

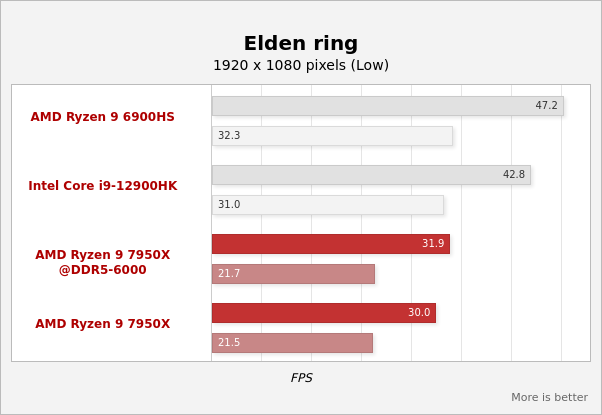

As the specifications already suggested, the integrated graphics unit of the Ryzen 7000 processors is sufficient for a rudimentary display of 2D and 3D content. Games only achieve adequate FPS at 1080p and below and at low details, if at all. Increasing the memory speed from DDR5-5200 to DDR5-6000 results in a performance increase of about 7%.
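As a rough sanity check on that 7% figure, it can be compared with the relative increase in memory transfer rate. This is only a sketch: the 7% is the review's approximate number, and the "scaling efficiency" ratio is my own illustrative measure, not something the review calculates.

```python
def relative_gain(new: float, old: float) -> float:
    """Relative increase of `new` over `old` (0.10 means +10%)."""
    return new / old - 1.0


def scaling_efficiency(perf_gain: float, resource_gain: float) -> float:
    """Fraction of the resource increase that shows up as performance."""
    return perf_gain / resource_gain


mem_gain = relative_gain(6000, 5200)    # DDR5-5200 -> DDR5-6000
igpu_gain = 0.07                        # "about 7%" per the review
eff = scaling_efficiency(igpu_gain, mem_gain)

print(f"memory transfer rate: +{mem_gain:.1%}")
print(f"scaling efficiency:   {eff:.3f}")
```

The transfer rate rises by roughly 15%, so a bit under half of that increase shows up as iGPU performance. That suggests the iGPU is only partly bandwidth-bound in these tests.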

The RDNA 2 GPU supports AV1 decoding in 10 and 8 bits. In addition, decoding in VP9, H.265 and H.264 (all also in 10 and 8 bit) is supported. However, encoding is only possible in H.265 and H.264. At least in 1080p and 1440p we were able to decode an AV1 video on YouTube in the Chrome browser, as shown in the following screenshot:

https://www-hardwareluxx-de.transla...sl=de&_x_tr_tl=en&_x_tr_hl=pt-PT&_x_tr_pto=sc

But as soon as we switched to a 4K or even 8K video, this was no longer possible: the video stuttered and dropped frames, and you certainly don't want to watch a video like that. Due to the limited time available for this test, we were unable to find out whether the driver was to blame or whether the graphics memory allocation was insufficient. We will revisit the topic.