Zealot

I quit My Job for Folding

Where it says "Optimizations", read "cheats".

Conclusion

Finally, let's draw conclusions from everything we found out in this article. First of all, let's look at the "optimizations" discovered in Detonator 45.23 and 52.10/14, limited to the GeForceFX series.

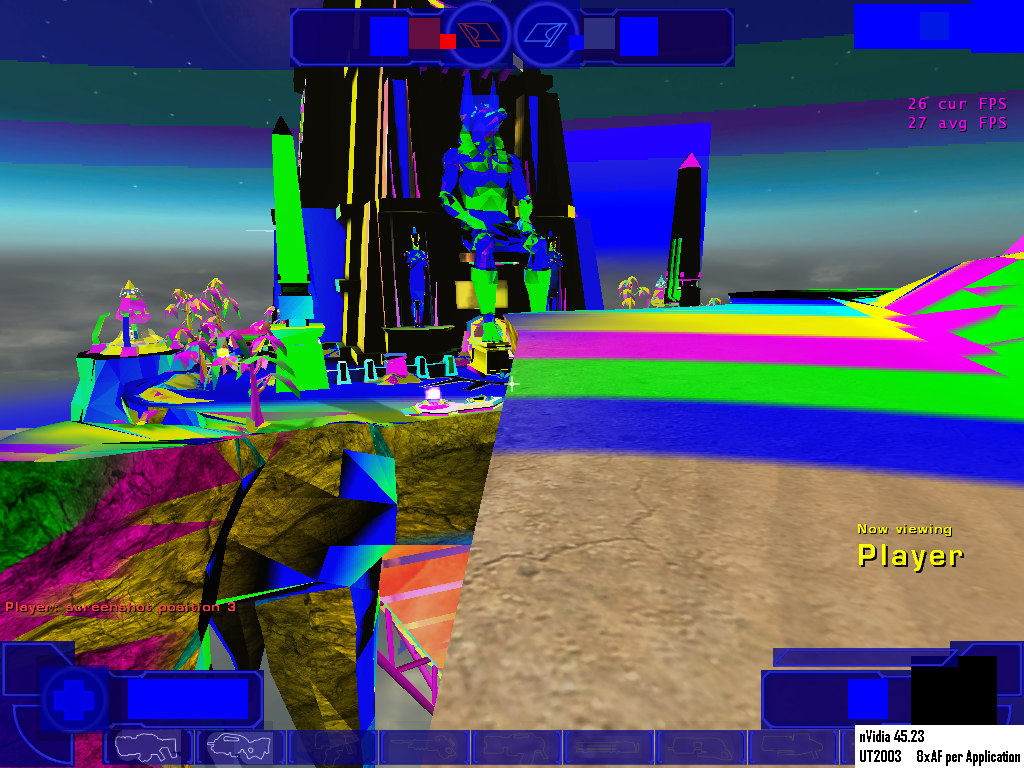

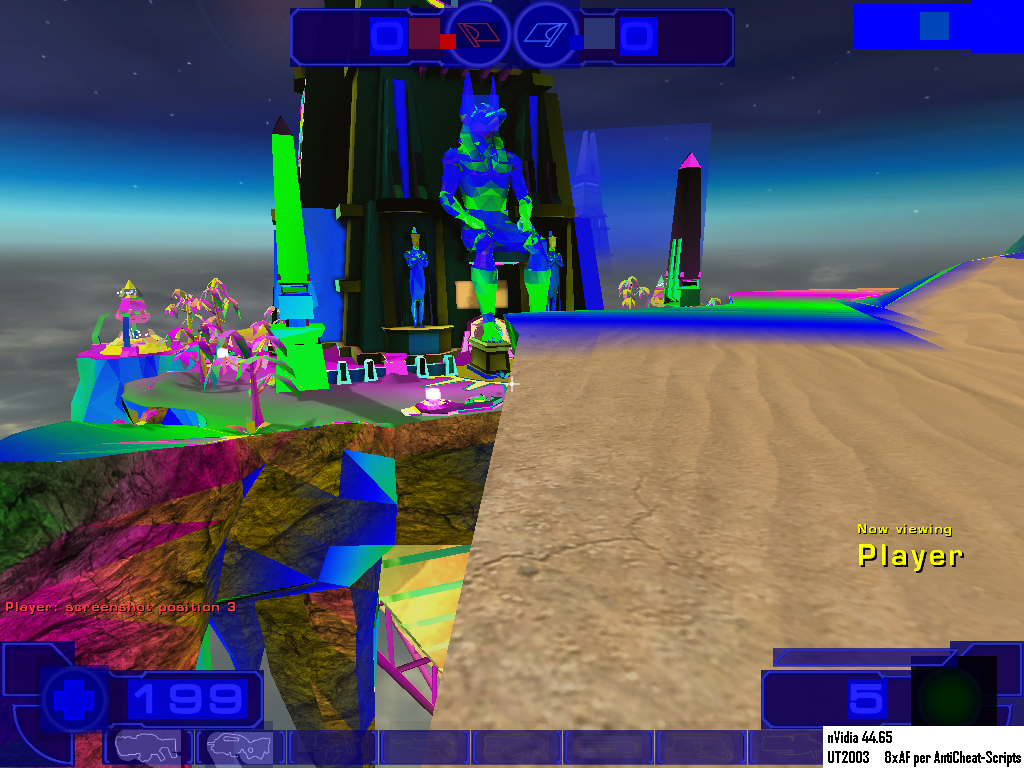

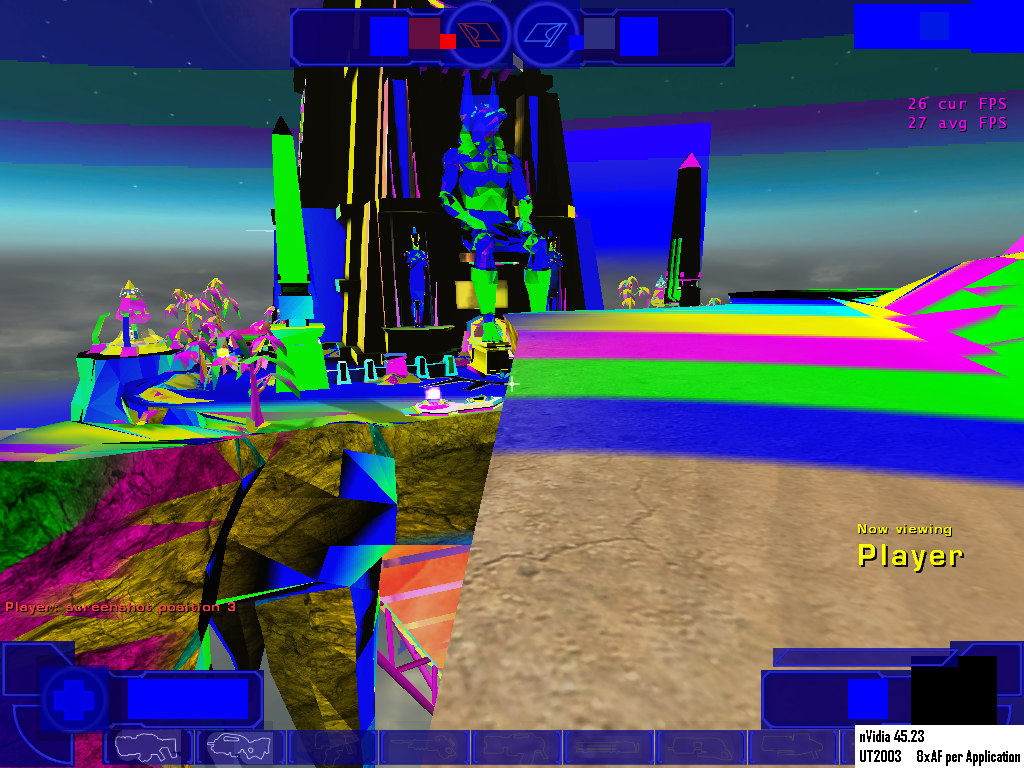

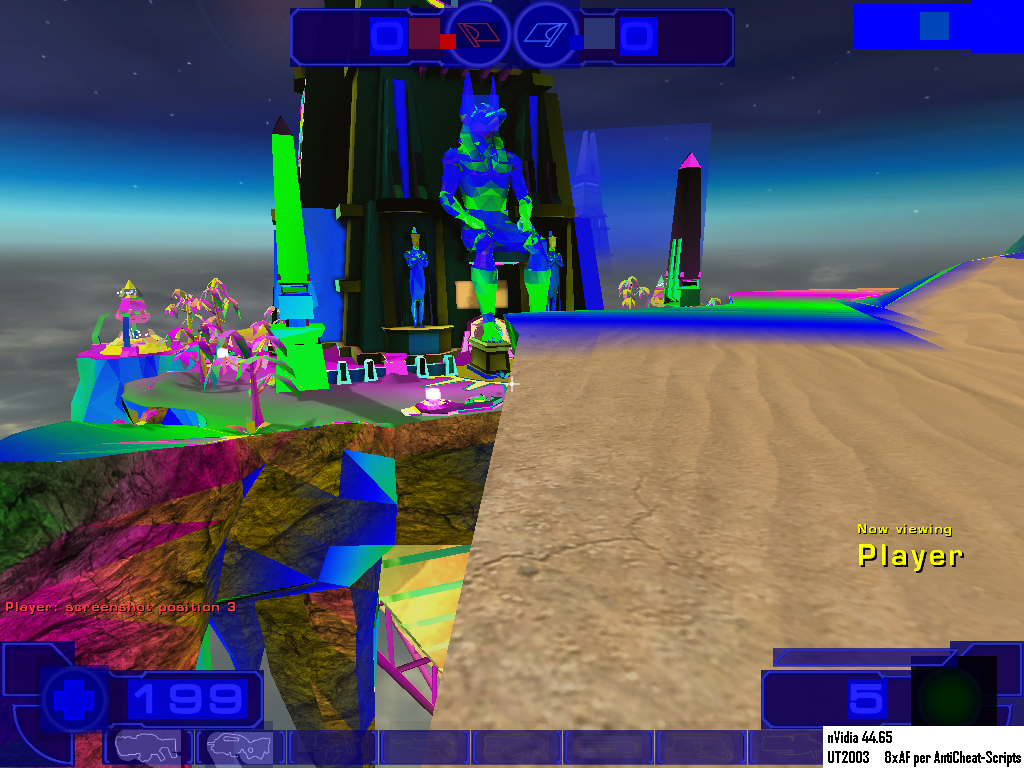

Detonator 45.23 shows exemplary filter quality in both OpenGL and Direct3D. However, an application-specific "optimization" was found for Unreal Tournament 2003, which can be deactivated by using the Application mode.

Detonators 52.10 and 52.14, by contrast, show a lot of "optimizations" for Direct3D filtering, but seemingly neither an application-specific "optimization" nor an "optimization" for OpenGL. In Direct3D, all texture stages are generally filtered with this faked trilinear filter, regardless of whether the filter setting is forced through the control panel or set through the Application mode. In addition, there is another "optimization" in the Control panel mode (not the Application mode): texture stages 1 through 7 are filtered with 2x anisotropic filtering at best.

On the credit side, both new drivers deliver clearly better shader performance. On the debit side, the already known Unreal Tournament 2003 "optimization" now applies to all games that cannot set the level of anisotropy themselves, and the trilinear filter has been given up in favor of the faked trilinear filter, regardless of the filter setting forced by the control panel or the settings made by the game.

Even with the new level of "optimizations", we cannot see a real performance increase apart from the shader performance. In our opinion, a few percent more performance does not justify these "optimizations" at all. They only make sense (if you can call it that) on closer inspection of the Unreal Tournament 2003 Flyby benchmark, where "optimizing" the filter quality can even double the performance.

And what’s the benefit for the consumer? Almost nothing. The real performance advantage in a game such as Unreal Tournament 2003 is clearly below 10 percent, which amounts to no more than 2-5 fps. The Flyby rates are, and will remain, theoretical measurements: they can show the raw performance of a graphics card quite well, but they say absolutely nothing about the real in-game performance of Unreal Tournament 2003.

(...)

This obviously does not excuse nVidia's "optimizing" of Detonators 52.10 and 52.14, where all of a sudden the old "optimization" for Unreal Tournament 2003 applies to all games. All things considered, the performance gain is far too small to justify this "optimization", and at the same time nVidia forces customers to use its own faked filter for the majority of games. Anyone playing a game that cannot set the anisotropic filter on its own has only one way to set it, namely the Control panel, where the user is forced to use the faked trilinear filtering method. As a result, nVidia offers its GeForceFX customers no correct trilinear filtering for the majority of currently available games!

(...)

Unfortunately, the Application mode isn’t what it used to be, because the applications (the games) don’t decide on the anisotropic filter themselves; the nVidia driver does, and it generally uses the faked trilinear filter instead of the correct one.

This represents a new stage in the history of driver "optimizing", because nVidia violates a clear and fixed standard. Unlike the anisotropic filter, which has no exact definition, the trilinear filter is based on an exact definition, which nVidia clearly and obviously breaks here. If these drivers, or a driver with similar filter behaviour, are officially released, then nVidia should not allow itself to put trilinear filtering on the feature checklist of its GeForceFX graphics chip series.
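To make the "exact definition" point concrete, here is a minimal Python sketch of how the blend between two adjacent mipmap levels is usually described. Bilinear filtering with mipmaps samples only the nearest level, while trilinear filtering blends the two surrounding levels linearly by the fractional level of detail. The `reduced_trilinear_weight` function and its `band` parameter are assumptions made purely for illustration: the article does not say how the faked filter is implemented, so this only shows one way a narrowed blend zone could look. All function names are hypothetical.

```python
import math


def bilinear_weight(lod):
    """Bilinear with mipmaps: snap to the nearer mip level, no blending."""
    frac = lod - math.floor(lod)
    return 0.0 if frac < 0.5 else 1.0


def trilinear_weight(lod):
    """Trilinear: blend weight is exactly the fractional level of detail."""
    return lod - math.floor(lod)


def reduced_trilinear_weight(lod, band=0.25):
    """ASSUMPTION, not the driver's actual method: blend only inside a
    narrow band around the mip transition, plain bilinear elsewhere."""
    frac = lod - math.floor(lod)
    lo = 0.5 - band / 2
    hi = 0.5 + band / 2
    if frac <= lo:
        return 0.0
    if frac >= hi:
        return 1.0
    return (frac - lo) / band


def blend(texel_near, texel_far, weight):
    """Linear interpolation between samples from two mip levels."""
    return (1.0 - weight) * texel_near + weight * texel_far


if __name__ == "__main__":
    for lod in (2.0, 2.25, 2.5, 2.75):
        print(lod,
              bilinear_weight(lod),
              trilinear_weight(lod),
              reduced_trilinear_weight(lod))
```

With a level of detail of 2.25, true trilinear filtering already mixes in a quarter of the next mip level, whereas the sketched narrow-band variant still behaves like plain bilinear there. That difference in where blending happens is what would make such a filter cheaper but visibly different from the defined trilinear result.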

But there's one thing here that I don't quite understand. What exactly is trilinear filtering? What is it for? What is the difference between trilinear and bilinear?

And how should I interpret these images in UT2003?
